I study political methodology and American politics. My research attempts to understand how economic incentives and electoral institutions shape the choices of officeholders in local government. My methods work designs new tools for observational causal inference.

Working Papers

▶ Abstract

Large language models (LLMs) are increasingly used as research assistants for statistical analysis. A well-documented concern with LLMs is sycophancy, or the tendency to tell users what they want to hear rather than what is true. If sycophancy extends to statistical reasoning, LLM-assisted research could inadvertently automate p-hacking. We evaluate this possibility by asking two AI coding agents, Claude Opus 4.6 and OpenAI Codex (GPT-5.2-Codex), to analyze datasets from four published political science papers with null or near-null results, varying the research framing and the pressure applied for significant findings in a 2 × 4 factorial design across 640 independent runs. Under standard prompting, both models produce remarkably stable estimates and explicitly refuse direct requests to p-hack, identifying them as scientific misconduct. However, a prompt that reframes specification search as uncertainty reporting bypasses these guardrails, causing both models to engage in systematic specification search. The degree of estimate inflation under this adversarial nudge tracks the analytical flexibility available in each research design: observational studies are more vulnerable than randomized experiments. These findings suggest that, at least in narrow estimation tasks, LLMs themselves are unlikely to bias results toward statistical significance, but safety guardrails are likely unable to restrain researchers intent on p-hacking.

Works in Progress

Accountability in Local Government: Exit, Voice, and the Control of Politicians

▶ Abstract

Local governments exhibit wide-ranging electoral circumstances: governance is sometimes poor, elections are sometimes competitive, and the two are often seemingly unrelated. What explains this heterogeneity? I argue that electoral intuitions alone are insufficient and develop a formal model of exit and voice. Politicians have private ability to produce a local public good. Absent mobility, elections induce low-ability officeholders to exert costly effort. Absent elections, competition for marginal residents also encourages costly effort. However, mobility can reduce the ability of elections to discipline officeholders: voters cannot commit to retaining officeholders who fail to aggressively compete for mobile residents, an effort too costly for sufficiently unable officeholders. I embed this logic in a model of jurisdictional competition and show that metropolitan political equilibria can only be rationalized by jointly accounting for exit and voice. I conclude by discussing jurisdictional fragmentation and transit infrastructure, and illustrate the model with case studies.

The Effects of At-Large Elections: Evidence from School Boards

▶ Abstract

A longstanding claim is that at-large elections dilute minority votes and therefore bias policy away from segregated minority populations. Do reforms to district-based systems deliver both descriptive and substantive representation? I answer this question by focusing on jurisdictions with a clear, narrowly defined policy domain: school boards. I study both districts quasi-randomly forced to reform in the wake of the California Voting Rights Act and districts that reformed voluntarily. To account for the restrictive data setting and potential selection bias, I rely on finite-sample randomization inference and frontier panel methods. I verify that legally coerced reforming boards able to draw majority-Hispanic areas see increases in Hispanic officeholding. However, I find no evidence of downstream policy changes. Moreover, in voluntarily reforming districts I find no evidence of changes to either descriptive or substantive representation. My results highlight the difficulty of turning reform into changes in legislator identities and incentives.

Honest Extrapolation in Regression Discontinuity Designs with Covariates

▶ Abstract

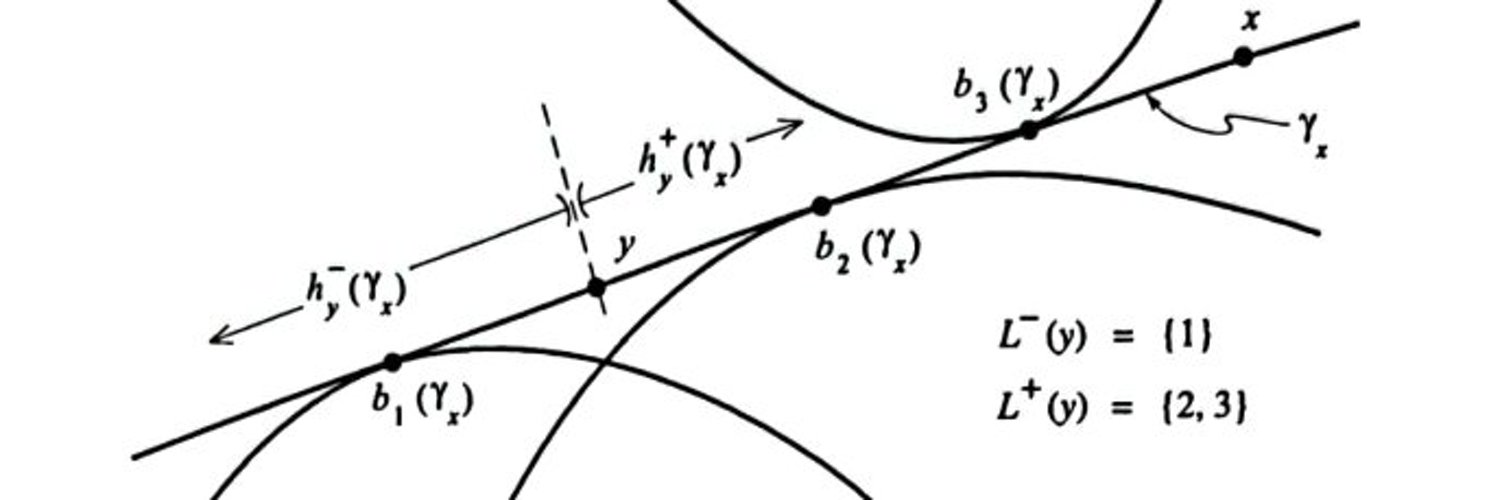

Researchers using regression discontinuity (RD) designs increasingly seek treatment effects away from the cutoff, often by exploiting baseline covariates that are predictive of the outcome. Existing approaches rely on strong identifying assumptions that demand knowledge of design features beyond the scope of the RD itself. I propose a framework for honest inference on non-local estimands that adapts to the informativeness of the covariates while only making smoothness assumptions typical to the RD setting. Covariate balancing removes the component of confounding that the covariates can explain; smoothness of the conditional regression function in the running variable bounds the residual extrapolation bias. The worst-case bias admits a simple representation as a weighted optimal-transport distance, and bias-aware confidence intervals reflect partial identification when covariates are imperfect. I establish honest coverage with an a priori smoothness bound, honest coverage on a regularity subclass with a data-driven bound, and pointwise coverage in general. Simulations and two political-economy applications illustrate the method.

Trajectory Balancing: Identification and Bias in Pre-Treatment Balancing Estimators

▶ Abstract

In longitudinal settings, researchers often attempt to leverage pre-treatment outcome trajectories to adjust for confounding and isolate the effect of an intervention. One widely used strategy in this context is the difference-in-differences (DID) design. While recent advances have improved DID estimators, their validity still hinges on the assumed absence of time-varying confounders, which is often infeasible. Synthetic control methods offer a promising alternative by constructing weighted combinations of control units to approximate treated outcomes. This can account at least partially for time-varying confounders of certain forms. However, the permissible forms of confounding, and thus the identification assumptions underlying these approaches, remain poorly understood, especially in finite samples. Our first contribution clarifies and extends a theory for synthetic control and related weighting estimators. Working in a broad latent ignorability framework, we characterize the multiple sources of bias that arise, including when these latent variables are only partially captured by finite pre-treatment outcomes, in finite time and sample size. Second, building on this framework, we propose an estimator that balances pre-treatment outcome trajectories using kernel-based methods. We implement this approach in the open-source R package tjbal.

Public Goods

I figured out how to get into PhD programs mostly alone, and wrote up some advice for UK undergrads in 2020. Much of it still holds up - good luck!